In 2019, a hospital in Dallas sent us an eligibility check at 6:47 AM on a Monday. A patient had walked into the ER with abdominal pain. Before ordering a CT scan that would cost the hospital $3,200 if the patient's insurance didn't cover it, the admitting nurse needed to know whether the patient's plan was active, what the deductible looked like, and whether prior authorization was required for outpatient imaging.

Our system sent a 270 transaction to the payer. The payer's system was down. Not offline-down. Worse: slow-down. The kind of slow that doesn't trigger a health check failure but makes every request take eleven seconds instead of two hundred milliseconds. Our system waited. The connection pool filled. Other eligibility checks, for other patients at other hospitals, started queueing behind the stalled payer connections. Within four minutes, the service was returning timeouts to every client, not just the ones routing to the degraded payer.

One slow payer took down eligibility checks for all payers. A nurse in Dallas couldn't verify insurance. A scheduling coordinator in Memphis couldn't confirm coverage for a surgery the next morning. A billing team in Atlanta couldn't run their daily eligibility batch.

The system was doing exactly what it was designed to do. The problem was what it wasn't designed for.

This article designs an eligibility verification service from scratch — the kind of system that handles that Monday morning without blinking. It's the capstone of this architecture series, and it uses every concept from the previous six parts: the five forces framework from Part 1, the resilience patterns from Part 2, the shipping discipline from Part 3, the scaling stages from Part 4, the subsystem deep dives from Part 5, and the reference numbers from Part 6.

One system. Everything we've covered. Let's build it.

What the System Does

An eligibility verification service sits between healthcare providers (hospitals, clinics, physician offices) and insurance payers. The provider needs to know: is this patient covered? What benefits do they have? What will the patient owe out of pocket?

The transaction flow is standardized by HIPAA:

- The provider sends a 270 transaction (eligibility inquiry) — who is this patient, what is their member ID, what service are we checking coverage for?

- The payer responds with a 271 transaction (eligibility response) — active/inactive, coverage details, co-pay amounts, deductible status, prior authorization requirements.

Simple in concept. Brutal in practice. Here's why:

| Challenge | Reality |
|---|---|
| Volume | A mid-size health system runs 50,000–100,000 eligibility checks per day. Peak hours (7–10 AM) concentrate 40% of daily volume into three hours. |
| Latency requirements | The nurse at the front desk needs an answer in under 2 seconds. The patient is standing there. The waiting room is full. |
| Payer variability | Each payer responds differently. Some return rich benefit detail. Some return the bare minimum. Some take 200ms. Some take 8 seconds. Some go down on Tuesdays. |
| Batch + real-time | Real-time checks happen at the point of care. Batch checks run overnight to verify tomorrow's scheduled patients before the doors open. Same service, two access patterns. |
| Compliance | HIPAA governs every transaction. PHI in transit and at rest must be encrypted. Audit trails are non-negotiable. |

The Five Forces Applied

Before drawing any boxes, let's run this through the framework from Part 1:

Constraints — The team is six engineers. The timeline is twelve weeks to MVP. The budget allows for managed cloud services but not an enterprise integration platform. We're building on AWS.

Tradeoffs — We need answers in under two seconds for real-time checks, but we must also handle overnight batch runs of 200,000 records. Caching helps the real-time path far more than it helps batch, where most members have not been checked recently. We need both paths.

Failure modes — The 2019 incident taught us the critical one: a slow payer must not affect checks routed to healthy payers. Payer isolation is the single most important architectural decision. Everything else follows from it.

Evolution — Today it's 270/271 eligibility. Six months from now, the business wants to add 276/277 claim status checks through the same service. The architecture must accommodate new transaction types without a rewrite.

Operational cost — Six engineers can't babysit a Kafka cluster. Every infrastructure choice must be managed or serverless. Operational simplicity isn't a preference — it's a constraint.

High-Level Architecture

The architecture has six components. Each one exists for a specific reason:

API Gateway — Rate limiting, authentication, TLS termination. Every request gets a correlation ID here that follows it through the entire system. This is your audit trail anchor.

Eligibility Service — The core. Receives the 270, checks the cache, routes to the right payer, transforms the 271 response, writes the audit record. Stateless — any instance can handle any request.

Cache Layer (Redis) — A patient's eligibility doesn't change every minute. A cache with a 15-minute TTL absorbs repeated checks for the same member. At peak hours, this cuts payer traffic by 60–70%.

Payer Router — The critical piece. Routes each request to the correct payer adapter based on the payer ID in the 270. Each payer gets its own circuit breaker, its own timeout, its own connection pool. This is how you prevent the Dallas incident — a slow Payer A does not touch the connection pool for Payer B.

Payer Adapters — One adapter per payer. Each handles the payer's specific quirks — endpoint URL, authentication method, response format variations, companion guide rules. The adapter translates between our canonical request format and the payer's specific 270/271 implementation. For payers with non-standard responses — and there are always payers with non-standard responses — an AI agent assists with response normalization, extracting benefit details from messy 271s that don't follow the companion guide cleanly. The agent proposes the parsed structure; a schema validator confirms it before it enters the cache.

Postgres — Audit trail, transaction history, payer configuration. Not in the hot path for real-time checks (the cache handles that). Primary for batch operations and reporting.

The Detail That Saves You: Payer Isolation

This is the architectural decision that matters more than everything else combined.

Every payer gets its own circuit breaker, its own connection pool, its own timeout configuration, and its own retry policy. A payer that is slow, degraded, or offline is isolated — its problems do not propagate to requests bound for other payers. This is the lesson from Part 2 (resilience) applied to a specific domain: the blast radius of a payer failure must be exactly one payer.

Here's what payer isolation looks like in practice:

| Config | Payer A (large national) | Payer B (regional) | Payer C (state Medicaid) |
|---|---|---|---|
| Timeout | 3 seconds | 5 seconds | 8 seconds |
| Circuit breaker threshold | 5 failures in 30s | 3 failures in 60s | 10 failures in 120s |
| Connection pool size | 50 | 20 | 10 |
| Retry policy | 1 retry, 500ms backoff | 2 retries, 1s backoff | No retry (too slow) |
| Fallback | Return cached (if < 24h) | Return cached (if < 4h) | Return "unable to verify" |

These aren't hypothetical numbers. They're calibrated from observing each payer's actual behavior over months. Payer A is fast but occasionally drops connections — a single retry with short backoff handles it. Payer C is a state Medicaid system that runs on infrastructure from 2008 — it's slow by nature, so the timeout is generous, but retrying a slow system just makes it slower, so retries are disabled.
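A minimal Python sketch of the per-payer breaker idea, assuming a sliding-window failure count and a fixed cooldown before a probe request is allowed through. The class name, the `cooldown_s` default, and the payer keys are illustrative assumptions rather than the production implementation; only the thresholds come from the table above. Timestamps are passed in explicitly so the behavior is easy to test.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CircuitBreaker:
    """Opens after `max_failures` failures inside a sliding `window_s` window."""
    max_failures: int
    window_s: float
    cooldown_s: float = 30.0  # assumption: time spent open before a probe is allowed
    _failures: deque = field(default_factory=deque, init=False)
    _opened_at: Optional[float] = field(default=None, init=False)

    def state(self, now: float) -> str:
        if self._opened_at is None:
            return "closed"
        if now - self._opened_at >= self.cooldown_s:
            return "half-open"  # let one probe request test recovery
        return "open"

    def allow(self, now: float) -> bool:
        return self.state(now) != "open"

    def record_success(self, now: float) -> None:
        # Only a successful probe closes a tripped breaker.
        if self.state(now) == "half-open":
            self._opened_at = None
            self._failures.clear()

    def record_failure(self, now: float) -> None:
        if self.state(now) == "half-open":
            self._opened_at = now  # failed probe: reopen and restart the cooldown
            return
        self._failures.append(now)
        # Drop failures that have aged out of the sliding window.
        while self._failures and now - self._failures[0] > self.window_s:
            self._failures.popleft()
        if len(self._failures) >= self.max_failures:
            self._opened_at = now


# One breaker per payer, so a tripped Payer A never blocks Payer B or C.
BREAKERS = {
    "payer_a": CircuitBreaker(max_failures=5, window_s=30),
    "payer_b": CircuitBreaker(max_failures=3, window_s=60),
    "payer_c": CircuitBreaker(max_failures=10, window_s=120),
}
```

The router would call `BREAKERS[payer_id].allow(time.monotonic())` before each payer call and fall back to the cached result or the "unable to verify" response when it returns `False`.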

Six months after deploying payer isolation, a major national payer pushed a code update on a Friday evening. By Monday at 7 AM, their eligibility endpoint was returning 500 errors on roughly 30% of requests and taking 12 seconds on the rest.

Our circuit breaker for that payer tripped at 7:02 AM. All requests to that payer started returning the most recent cached result (if available) or a structured "unable to verify — payer temporarily unavailable" response. The provider systems received a clear signal: try again later or verify manually.

Every other payer continued operating normally. Response times for the rest of the system didn't move. The monitoring dashboard showed one red payer tile and fifteen green ones. The on-call engineer got a single alert, confirmed the payer was the source, and went back to coffee.

Under the old architecture, that Monday would have been a four-hour outage for every provider on our platform. Under the new one, it was a four-minute Slack thread.

The Cache Strategy

Eligibility data has a natural caching profile: it changes infrequently (a member's plan doesn't shift between the 8 AM and 10 AM check) but it must be fresh enough to catch terminations, plan changes, and coverage lapses.

The cache key is a composite:

eligibility:{payer_id}:{member_id}:{service_type_code}:{date_of_service}

The TTL depends on the context:

| Scenario | TTL | Reasoning |
|---|---|---|
| Real-time check (point of care) | 15 minutes | Same patient being checked by multiple departments in the same visit |
| Batch pre-verification | 4 hours | Overnight batch results used for next-day appointments |
| Known stable member (continuous enrollment) | 1 hour | Members in stable employer plans rarely change mid-month |
| Recent change detected | 0 (no cache) | If a previous response indicated pending changes, always go to payer |
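The key format and TTL table above can be sketched in a few lines of Python. The function names, the ISO date format in the key, and the precedence between scenarios (pending change first, then batch, then stable member) are assumptions made for illustration; the article does not specify them.

```python
from datetime import date
from typing import Optional

# TTLs in seconds, taken from the table above.
TTL_SECONDS = {
    "realtime": 15 * 60,
    "batch": 4 * 60 * 60,
    "stable_member": 60 * 60,
}


def cache_key(payer_id: str, member_id: str,
              service_type_code: str, date_of_service: date) -> str:
    """Build the composite key; ISO dates are an assumed convention."""
    return (f"eligibility:{payer_id}:{member_id}:"
            f"{service_type_code}:{date_of_service.isoformat()}")


def ttl_for(source: str, stable_member: bool = False,
            pending_change: bool = False) -> Optional[int]:
    """Return the TTL in seconds, or None when the result must not be cached."""
    if pending_change:
        return None  # always go back to the payer
    if source == "batch":
        return TTL_SECONDS["batch"]
    if stable_member:
        return TTL_SECONDS["stable_member"]
    return TTL_SECONDS["realtime"]
```

With a Redis client, the returned TTL would typically be passed as the expiry when writing the full 271 payload, and a `None` result would skip the write entirely.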

At 100,000 checks per day with a 15-minute TTL, the cache absorbs 60–70% of real-time requests. That's 60,000–70,000 fewer round trips to payer systems per day. The payers appreciate this too — they rate-limit aggressively, and a provider that hammers their API gets throttled or blocked.

Cache the full 271 response, not just the active/inactive flag. Providers need benefit details — deductible remaining, co-pay amounts, prior auth requirements. Caching only the status forces a fresh payer call whenever someone needs detail, which defeats the purpose.

Batch Path

The real-time path handles the nurse at the front desk. The batch path handles a different problem: the scheduling team that needs to verify eligibility for 3,000 patients with appointments tomorrow.

The batch path doesn't go through the API gateway. It runs as a scheduled job — typically at 2 AM — that pulls tomorrow's appointment list, groups patients by payer, and sends eligibility checks in controlled bursts.

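Why bursts? Because payers rate-limit. Send 3,000 requests at once and the payer blocks you. Send 50 per second with a 200ms delay between batches, and you stay under the radar. At that rate a single 3,000-patient list needs only about a minute of send time; the roughly 90-minute figure applies to the full nightly run across all providers.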

    Batch scheduler (2 AM)
      → Pull tomorrow's appointments from EHR feed
      → Group by payer
      → For each payer:
          → Check cache first (skip if valid result exists)
          → Send eligibility checks at payer-specific rate
          → Write results to Postgres
          → Update cache with fresh results
      → Generate exception report (unverified, terminated, pending)
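The grouping and pacing steps above might look like this in Python. This is a sketch under stated assumptions: `send_check`, `cached`, and the appointment dictionary shape are hypothetical stand-ins for the real payer adapter, cache lookup, and EHR feed, and the sleep function is injected so the pacing can be tested without waiting.

```python
from collections import defaultdict
from itertools import islice


def group_by_payer(appointments):
    """Bucket appointments by their payer_id."""
    groups = defaultdict(list)
    for appt in appointments:
        groups[appt["payer_id"]].append(appt)
    return dict(groups)


def bursts(items, burst_size):
    """Yield consecutive chunks of at most burst_size items."""
    it = iter(items)
    while chunk := list(islice(it, burst_size)):
        yield chunk


def run_batch(appointments, send_check, sleep,
              burst_size=50, pause_s=0.2, cached=lambda appt: False):
    """Send checks payer by payer in paced bursts, skipping cached members."""
    results = []
    for payer_id, appts in group_by_payer(appointments).items():
        pending = [a for a in appts if not cached(a)]
        for chunk in bursts(pending, burst_size):
            results.extend(send_check(a) for a in chunk)
            sleep(pause_s)  # pause between bursts to respect payer rate limits
    return results
```

In production the `sleep` argument would be `time.sleep`, and `burst_size` and `pause_s` would come from each payer's configuration rather than a single default.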

The exception report is the output that matters — and in 2026, it's no longer a raw list. An AI agent triages every exception before a human sees it. A member ID mismatch where the format changed? The agent flags it as a probable system migration and suggests the corrected format. A payer returning "inactive" for a member who enrolled three days ago? The agent recognizes the pattern — enrollment file processing lag — and marks it as likely to self-resolve within 48 hours, with a recommended recheck time. A coverage termination that contradicts the employer's 834 enrollment file? High priority, probable data conflict, needs human review now.

At 6 AM, the scheduling team opens a triaged, prioritized list — not a wall of undifferentiated failures. The AI handles the pattern recognition. The human handles the judgment calls. The other 2,850 patients? Already verified. No nurse has to wait at the front desk.

The Data Model

Four tables carry the system:

    -- Payer configuration: endpoints, timeouts, auth, circuit breaker settings
    CREATE TABLE payers (
        payer_id         TEXT PRIMARY KEY,
        name             TEXT NOT NULL,
        endpoint_url     TEXT NOT NULL,
        auth_type        TEXT NOT NULL,       -- 'mutual_tls', 'oauth2', 'basic'
        timeout_ms       INT NOT NULL DEFAULT 3000,
        max_connections  INT NOT NULL DEFAULT 20,
        circuit_breaker  JSONB NOT NULL,      -- threshold, window, fallback policy
        companion_guide  JSONB,               -- payer-specific field mappings
        active           BOOLEAN DEFAULT true,
        updated_at       TIMESTAMPTZ NOT NULL DEFAULT now()
    );

    -- Every eligibility transaction, for audit and analytics
    CREATE TABLE eligibility_transactions (
        id                UUID PRIMARY KEY DEFAULT gen_random_uuid(),
        correlation_id    UUID NOT NULL,
        payer_id          TEXT NOT NULL REFERENCES payers(payer_id),
        member_id         TEXT NOT NULL,
        subscriber_id     TEXT,
        service_type      TEXT NOT NULL,
        date_of_service   DATE NOT NULL,
        request_raw       BYTEA,              -- encrypted 270
        response_raw      BYTEA,              -- encrypted 271
        response_status   TEXT NOT NULL,      -- 'active', 'inactive', 'error', 'timeout'
        response_time_ms  INT NOT NULL,
        source            TEXT NOT NULL,      -- 'realtime', 'batch'
        created_at        TIMESTAMPTZ NOT NULL DEFAULT now()
    );

    -- Composite indexes for the queries that matter
    CREATE INDEX idx_elig_member_date
        ON eligibility_transactions (member_id, date_of_service DESC);

    CREATE INDEX idx_elig_payer_created
        ON eligibility_transactions (payer_id, created_at DESC);

    CREATE INDEX idx_elig_correlation
        ON eligibility_transactions (correlation_id);

    -- Parsed benefit details from the 271 response
    CREATE TABLE eligibility_benefits (
        id                   UUID PRIMARY KEY DEFAULT gen_random_uuid(),
        transaction_id       UUID NOT NULL REFERENCES eligibility_transactions(id),
        benefit_type         TEXT NOT NULL,   -- 'deductible', 'copay', 'coinsurance', 'out_of_pocket'
        coverage_level       TEXT NOT NULL,   -- 'individual', 'family'
        in_network           BOOLEAN,
        amount               DECIMAL(12,2),
        remaining            DECIMAL(12,2),
        period               TEXT,            -- 'calendar_year', 'plan_year', 'visit'
        service_types        TEXT[],          -- array of service type codes covered
        prior_auth_required  BOOLEAN DEFAULT false,
        notes                TEXT
    );

    -- Batch run tracking
    CREATE TABLE batch_runs (
        id            UUID PRIMARY KEY DEFAULT gen_random_uuid(),
        run_date      DATE NOT NULL,
        total_checks  INT NOT NULL,
        cache_hits    INT NOT NULL,
        successful    INT NOT NULL,
        failed        INT NOT NULL,
        duration_ms   INT NOT NULL,
        started_at    TIMESTAMPTZ NOT NULL,
        completed_at  TIMESTAMPTZ
    );

The request_raw and response_raw columns store encrypted 270/271 transactions. These contain PHI: member names, dates of birth, subscriber IDs. Encryption at rest is a HIPAA requirement, not an option. Use column-level encryption with keys managed through AWS KMS or equivalent. The application decrypts on read, never the database.

Note what isn't here: no member table. No patient demographics. The eligibility service doesn't own member data — it processes transactions and caches results. The member's identity comes from the provider's EHR system in the 270 request. Keeping the service focused on transactions rather than trying to be a member database is a deliberate boundary. It simplifies the data model, reduces the PHI surface area, and means the service doesn't need to sync member data from upstream systems.

Scaling the Numbers

Let's apply the estimation framework from Part 6 to size this system.

Starting assumptions:

- 50 provider organizations, growing to 200 in Year 2

- Average 2,000 real-time checks per provider per day = 100,000 daily real-time

- Batch: 50,000 per night (growing to 200,000)

- Peak concentration: 40% of daily volume in 3 hours (7–10 AM)

Real-time path:

| Metric | Calculation | Result |
|---|---|---|
| Daily real-time checks | 50 providers × 2,000 | 100,000 |
| Peak 3-hour volume | 100,000 × 0.4 | 40,000 |
| Peak QPS | 40,000 / (3 × 3,600) = 3.7 | ~4 rps |
| With 3x headroom | 4 × 3 | ~12 rps |
| Year 2 (4x growth) | 12 × 4 | ~48 rps |
| Cache hit rate (estimated) | assumed | 65% |
| Payer calls at peak (Year 2) | 48 × 0.35 = 16.8 | ~17 payer calls/sec |

This is not a high-throughput system by internet standards. Forty-eight requests per second would make a social media engineer laugh. But every one of those requests has a patient waiting, a dollar amount attached, and a compliance requirement behind it. The architecture isn't about handling millions of requests. It's about handling every single request reliably, inside the two-second budget, even when a payer is having the worst day of its life.
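The real-time arithmetic in the table is simple enough to keep as a small script and rerun when the assumptions change. A minimal sketch; the function and variable names are illustrative, and the inputs are the starting assumptions stated above.

```python
import math


def peak_rps(daily_checks: int, peak_share: float, peak_hours: float) -> float:
    """Average requests per second during the peak window."""
    return daily_checks * peak_share / (peak_hours * 3600)


raw_peak = peak_rps(100_000, 0.40, 3)         # about 3.7 rps
design_rps = math.ceil(raw_peak) * 3          # round up to 4, then 3x headroom
year2_rps = design_rps * 4                    # 4x growth in provider count
payer_calls_per_sec = year2_rps * (1 - 0.65)  # only cache misses reach payers

print(f"peak {raw_peak:.1f} rps, design {design_rps} rps, "
      f"year 2 {year2_rps} rps, payer calls {payer_calls_per_sec:.1f}/s")
```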

Storage:

| Data | Size per record | Daily volume | Annual |
|---|---|---|---|
| Eligibility transactions | ~2 KB (with encrypted raw) | 150,000 (real-time + batch) | ~100 GB |
| Benefit details | ~500 bytes | 450,000 (avg 3 per transaction) | ~75 GB |
| Batch run metadata | ~200 bytes | 1 per day | Negligible |
| Total Year 1 | | | ~175 GB |

A single Postgres instance handles this comfortably. No sharding needed — possibly ever, unless the business scales to thousands of provider organizations. A primary with one read replica for reporting queries is the right starting architecture. Revisit if daily volume passes one million transactions.
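The storage figures can be checked the same way. A rough sketch, assuming 2 KB means 2,000 bytes and a 365-day year; under those assumptions the raw arithmetic lands a little above the table's rounded figures (about 110 GB and 82 GB), which does not change the conclusion that one Postgres instance is enough.

```python
def annual_gb(bytes_per_record: int, records_per_day: int, days: int = 365) -> float:
    """Yearly raw data volume in decimal gigabytes, ignoring index overhead."""
    return bytes_per_record * records_per_day * days / 1e9


transactions_gb = annual_gb(2_000, 150_000)  # eligibility_transactions rows
benefits_gb = annual_gb(500, 450_000)        # about 3 benefit rows per transaction
total_gb = transactions_gb + benefits_gb

print(f"transactions {transactions_gb:.1f} GB, benefits {benefits_gb:.1f} GB, "
      f"total {total_gb:.1f} GB per year")
```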

Observability

Every eligibility check produces four signals:

- Response time: broken down by payer. The dashboard shows each payer as a card with P50, P95, and P99 latency. When a payer's P95 drifts above their configured timeout, you know before the circuit breaker trips.

- Cache hit rate: overall and per payer. A sudden drop in cache hit rate means either traffic patterns changed (new provider onboarded with different member populations) or the cache is being invalidated too aggressively.

- Error rate: by payer, by error type (timeout, 500, malformed response, auth failure). The circuit breaker state is visible: closed (healthy), open (tripped), half-open (testing recovery).

- Transaction audit trail: every 270 sent, every 271 received, every cache hit, every fallback invoked. Correlation IDs link a provider's request to the payer call to the response. When someone asks "what happened to the eligibility check for patient X at 9:14 AM on Tuesday," you can answer in thirty seconds.

Set an alert when any single payer's error rate exceeds 10% over a 5-minute window. This catches degradation before the circuit breaker trips (which typically fires at a higher threshold). The goal is to know about a problem before it affects enough requests to trigger automatic isolation — giving you the option to intervene manually if the situation is recoverable.
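In practice this rule usually lives in the monitoring platform, but the logic is easy to state in Python. A sketch assuming one tracker per payer; the class name and the `min_requests` floor (which avoids alerting on a handful of requests at 3 AM) are assumptions, not part of the rule above.

```python
from collections import deque


class ErrorRateAlert:
    """Tracks one payer's outcomes over a sliding time window."""

    def __init__(self, threshold=0.10, window_s=300, min_requests=20):
        self.threshold = threshold
        self.window_s = window_s
        self.min_requests = min_requests  # assumption: ignore tiny samples
        self.events = deque()             # (timestamp, ok) pairs, oldest first

    def _trim(self, now):
        # Discard outcomes older than the window.
        while self.events and now - self.events[0][0] > self.window_s:
            self.events.popleft()

    def record(self, now, ok):
        self.events.append((now, ok))
        self._trim(now)

    def should_alert(self, now):
        self._trim(now)
        total = len(self.events)
        if total < self.min_requests:
            return False
        errors = sum(1 for _, ok in self.events if not ok)
        return errors / total > self.threshold
```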

The AI Layer

Everything above — the payer router, the cache, the circuit breakers, the batch scheduler — is infrastructure that existed in 2019. Good infrastructure. Necessary infrastructure. But if you're designing this system in 2026 and the AI layer is an afterthought, you're building a museum.

AI transforms four parts of this service in ways that weren't possible two years ago:

1. Response normalization — the payer adapter's secret weapon

The 271 specification is standardized. Payer implementations of the 271 are not. One payer nests deductible information inside a benefit detail loop with service type code "30." Another puts it at the subscriber level with a different qualifier. A third returns free-text notes like "Ded $2500 remaining $847.23 INN only" in a segment that's supposed to contain structured data.

Before AI, you wrote a custom parser for every payer variation. You maintained a library of edge-case handlers that grew every month. When a payer changed their response format — and they do, without notice — a developer dropped what they were doing to fix the parser.

Now, an LLM agent sits inside each payer adapter. It receives the raw 271, understands what the payer meant regardless of how they structured it, and extracts a normalized benefit object. The agent handles the ambiguity. A deterministic schema validator handles the trust — every field the agent extracts is checked against expected types, ranges, and code sets before it's accepted. If the validator rejects the agent's output, the transaction is flagged for human review rather than silently producing bad data.

This is the pattern that runs through the entire design: AI proposes, rules dispose.
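The "rules dispose" half of that pattern is ordinary deterministic code. A minimal sketch of such a gate in Python, assuming the agent returns a dictionary shaped like a row of the eligibility_benefits table; the function names and the specific checks are illustrative, and a real validator would also check code sets against the payer's companion guide.

```python
from decimal import Decimal, InvalidOperation
from typing import List, Optional

BENEFIT_TYPES = {"deductible", "copay", "coinsurance", "out_of_pocket"}
COVERAGE_LEVELS = {"individual", "family"}


def _money(value, name: str, errors: List[str]) -> Optional[Decimal]:
    """Parse a non-negative amount, recording a problem if it is invalid."""
    try:
        amount = Decimal(str(value))
    except InvalidOperation:
        errors.append(f"{name}: not a decimal amount")
        return None
    if not amount.is_finite() or amount < 0:
        errors.append(f"{name}: must be a non-negative amount")
        return None
    return amount


def validate_benefit(candidate: dict) -> List[str]:
    """Return a list of problems; an empty list means the output is accepted."""
    errors: List[str] = []
    if candidate.get("benefit_type") not in BENEFIT_TYPES:
        errors.append("benefit_type: unknown code")
    if candidate.get("coverage_level") not in COVERAGE_LEVELS:
        errors.append("coverage_level: unknown code")
    amount = _money(candidate.get("amount"), "amount", errors)
    remaining = _money(candidate.get("remaining"), "remaining", errors)
    if amount is not None and remaining is not None and remaining > amount:
        errors.append("remaining: exceeds amount")
    if not isinstance(candidate.get("prior_auth_required", False), bool):
        errors.append("prior_auth_required: must be a boolean")
    return errors
```

For the free-text example above ("Ded $2500 remaining $847.23 INN only"), an agent output of `{"benefit_type": "deductible", "coverage_level": "individual", "amount": "2500", "remaining": "847.23"}` passes, while a hallucinated remaining balance larger than the deductible is rejected and routed to human review.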

2. Anomaly detection — seeing what dashboards miss

Traditional monitoring catches threshold violations — error rate above 10%, latency above 3 seconds. AI catches pattern shifts that don't trigger thresholds.

A payer starts returning "inactive" for 12% more members than their historical baseline this week. The error rate hasn't spiked — the responses are technically valid. But an agent watching the data recognizes the anomaly and raises it: Payer B's inactive rate is 2.4 standard deviations above their 90-day mean. Possible causes: enrollment file processing delay on their end, plan year transition, or a data issue in their eligibility system. Recommend: contact payer operations before the exception volume hits the morning batch.

That alert at 11 PM saves the scheduling team from drowning in false "terminated" results at 6 AM.
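The statistical core of that alert is a z-score against the payer's own baseline. A sketch in Python using only the standard library; the function names and the 2.0 threshold are assumptions, and the history would be the payer's daily inactive rates over the trailing 90 days.

```python
from statistics import mean, stdev


def inactive_rate_zscore(history, today):
    """Standard deviations between today's rate and the historical mean."""
    sigma = stdev(history)  # sample standard deviation; needs 2+ points
    if sigma == 0:
        return 0.0          # flat baseline: no meaningful deviation to report
    return (today - mean(history)) / sigma


def is_anomalous(history, today, threshold=2.0):
    """Flag rates that sit at least `threshold` deviations from baseline."""
    return abs(inactive_rate_zscore(history, today)) >= threshold
```

The agent's contribution sits on top of this number: turning "2.4 standard deviations above the mean" into likely causes and a recommended action.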

3. Natural language benefit summaries

The raw 271 response is a wall of segment codes, service type qualifiers, and benefit amount loops. Translating that into something a front-desk coordinator can act on has traditionally required either deep EDI training or a custom UI that maps every code to a label.

An LLM generates a plain-language summary from the parsed benefit data:

    Coverage: Active (Blue Cross PPO, Group #4471829)
    Deductible: $2,500 individual / $1,847.23 remaining
    CT scan (CPT 74177): Covered, in-network. Prior authorization required.
    Estimated patient responsibility: $320 co-pay after deductible applied.
    Note: Out-of-pocket maximum ($6,500) not yet reached.

The summary is generated from the structured data the validator already confirmed — the LLM isn't interpreting raw EDI, it's formatting verified facts into readable English. The compliance risk is near zero because the source data has already passed deterministic validation.

When adding AI to a healthcare system, the trust architecture matters more than the model. Every AI output must flow through a deterministic gate before it reaches a user or downstream system. The model handles ambiguity and language. The gate handles correctness and compliance. Separating these concerns is what makes AI safe enough for healthcare — and what most teams skip when they're in a hurry.

4. Payer onboarding — from weeks to days

Onboarding a new payer used to mean: read their companion guide PDF (50–200 pages), identify how their 270/271 implementation differs from the base spec, write a custom adapter, test against their sandbox, iterate through certification. Two to four weeks of developer time.

An AI agent reads the companion guide, generates a draft payer adapter configuration — endpoint details, authentication method, field mapping overrides, known quirks — and produces a set of test cases that exercise the payer-specific variations. A developer reviews the generated config, runs the test suite, and adjusts. The agent did the tedious parsing. The developer did the judgment. Onboarding drops from weeks to days.

What This Design Doesn't Include (Yet)

A system design in one article means deliberate omissions. These are the things a production system would need that I'm choosing not to cover here — not because they don't matter, but because each one deserves its own treatment:

- FHIR translation layer — converting between X12 270/271 and FHIR Coverage/CoverageEligibilityRequest resources. As payers adopt FHIR APIs (CMS mandates are pushing this), the payer adapters need to speak both protocols. The AI layer we've built here makes this easier — the same normalization agent that handles non-standard 271s can map to FHIR resources — but the FHIR-specific validation and conformance testing is its own design problem.

- Member matching — correlating a member ID in a 270 with the same person across multiple payer systems when IDs don't match. Probabilistic matching with human review queues. An AI agent can score match candidates, but the deterministic gate here is especially critical — a false positive match means showing Patient A's benefits to Patient B's provider.

- Agent orchestration at scale — the AI capabilities described above use single-agent patterns. A full agent/sub-agent orchestration layer — where a coordinator agent delegates parsing, validation, anomaly detection, and summarization to specialized sub-agents running concurrently — is the production-grade evolution. Think of it as microservices for intelligence.

- Analytics layer — denial pattern analysis, payer performance benchmarking, coverage gap detection. With the transaction history and AI layer in place, an analytics agent can surface insights like "Payer B denies 23% of CT scan prior auths on first submission — recommend bundling clinical notes with the initial request." This is where the data becomes strategic rather than transactional.

Each of these is a system design in its own right. The eligibility service we've designed here is the foundation they'd all build on.

The Series, Complete

This is Part 7 — the final part of the solution architecture series.

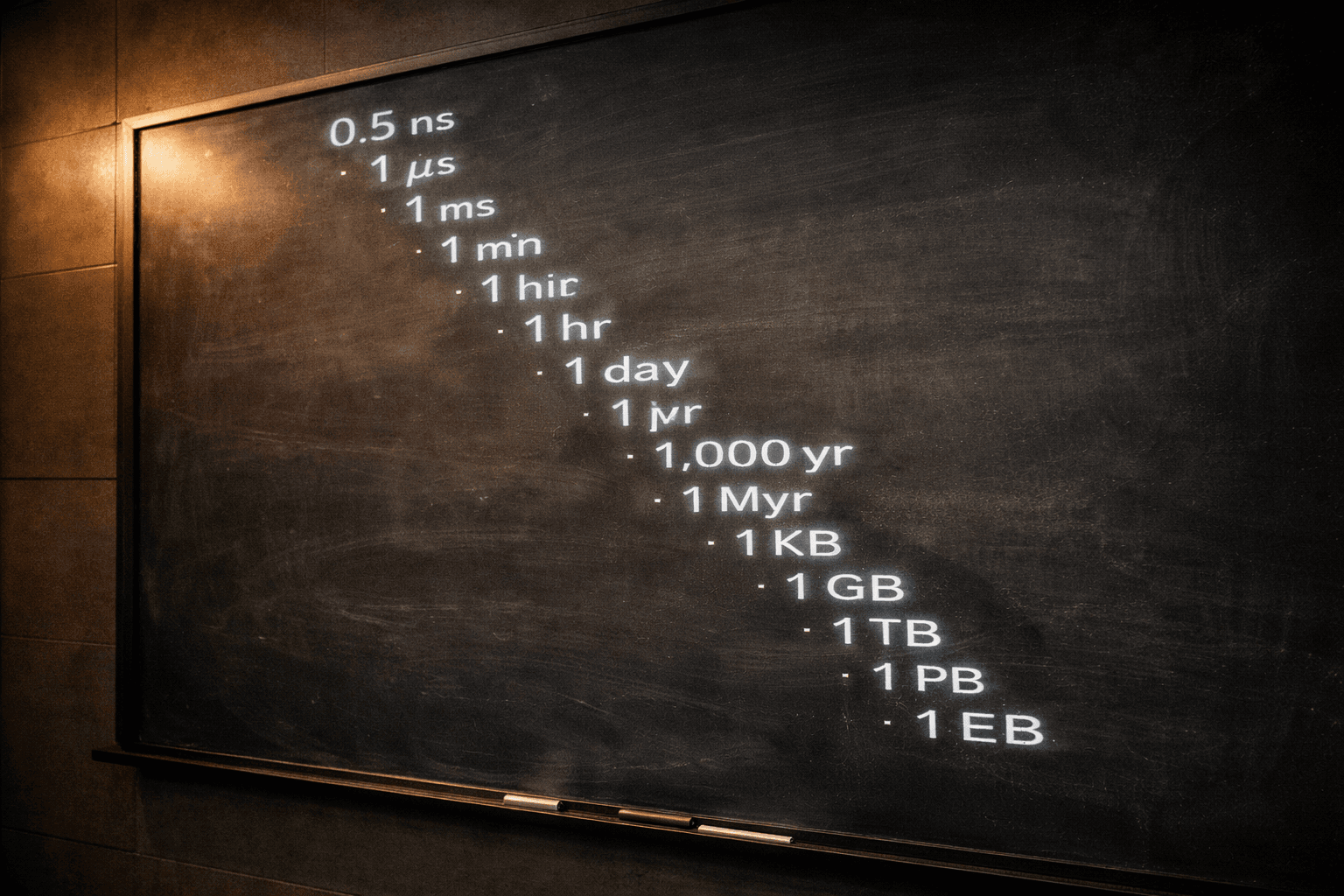

We started with the architecture nobody notices: the framework, the five forces, the argument that restraint is the most undervalued skill in system design. We walked through what happens when everything breaks: circuit breakers, bulkheads, the resilience patterns that determine whether a failure is a blip or a catastrophe. We covered the discipline of architecture that ships: MVPs, CI/CD, the build-versus-buy decision. We opened the engine room with the numbers behind the architecture: the full scaling journey from 50 requests per second to 500,000, with the actual queries and configs. We went deep on the subsystems that hold: load balancers, CDN edge caching, monitoring. We built the reference card with the numbers every architect should know: the latency numbers, availability math, and estimation framework to carry into a design review.

And here, in Part 7, we put it all together. One system. One domain. Every concept from the series applied to a real design problem — the kind of system that sits between a nurse and an insurance company and has to work every single time.

The architecture that matters is the one that lets a nurse in Dallas verify a patient's insurance at 6:47 AM on a Monday without knowing or caring that a payer in Connecticut is having the worst morning of its fiscal year.

That's the whole series in one sentence. Build systems that let the people who depend on them stop thinking about the plumbing. Make the architecture disappear. Let the nurse do her job.

If you've followed along from Part 1, you now have the mental model, the resilience toolkit, the shipping discipline, the scaling playbook, the subsystem knowledge, the reference numbers, and a complete design to tie them together.

Build well. Sleep soundly.