A system I helped architect in 2016 started as a single API behind an Elastic Load Balancer, a Postgres database, and a Redis cache. Fifty requests per second on a good day. The team was four engineers. The customers were internal — a few hundred operations staff using a dashboard that pulled reports and processed approvals.

Nobody was excited about any of it.

The tech lead at the time — one of the sharpest engineers I've worked with — actively resisted making the architecture more interesting. When someone proposed event sourcing for the audit trail, she asked how many audits they'd actually need to replay. The answer was maybe two a year, manually, by one person. "A table with timestamps will do," she said. When someone else suggested breaking the monolith into services for "separation of concerns," she pointed out that the team was four people and the concerns weren't actually separate — every feature touched users, approvals, and notifications. Splitting them into services wouldn't separate the logic. It would scatter it.

She had a phrase I still think about: "Don't solve problems you haven't earned yet." It sounded like laziness if you weren't paying attention. It was discipline.

By 2021, that same system was serving five thousand requests per second. The customer base had expanded from internal ops to external partners via a public API. The database had grown from one instance to a primary with three read replicas. The single API had split into two services — one for reads, one for writes — behind the same load balancer. Redis had gone from a nice-to-have to the thing that kept the database from melting.

Every one of those changes came from a real constraint, not a roadmap. Read latency climbing past the budget they'd set — add a replica. Write throughput saturating during month-end processing — split the read and write paths. Cache hit rates dropping because the access pattern had shifted — rethink the cache keys. Each change was the smallest intervention that bought the next six months of headroom. No grand re-architecture moment. No whiteboard session where someone drew the "future state" diagram. Just a team paying attention to what the system was telling them and responding with restraint.

The system survived because the people who built it had the patience to let the problems reveal themselves before solving them. They resisted the pull of interesting. They chose boring, repeatedly, knowing that boring compounds into something remarkable if you give it enough time.

Compare that with a system I inherited around the same time. Different company, similar traffic projections. The original team had designed for ten thousand requests per second from day one. They'd built a Kubernetes cluster with auto-scaling, a Kafka-based event pipeline for what was essentially request-response traffic, a service mesh with Istio, and a custom API gateway — all before they had their first paying customer.

When I arrived, they had twelve microservices and forty requests per second. The Kafka cluster cost more per month than the engineering team's coffee budget. Two of the twelve services had never processed a single production request. The Istio sidecar proxies consumed more memory than the application containers they were proxying.

They had built for scale they didn't have, with complexity they couldn't afford. Every modification touched infrastructure that existed for a future that hadn't arrived. The system was harder to change, not easier — and the team was exhausted, spending most of their energy operating the architecture rather than building the product.

The first system scaled because it was built by people who understood that restraint is a skill. The second system couldn't scale because complexity had already consumed the team's capacity to adapt. The architecture that works is the one that leaves room for the team to think — room that disappears fast when every deploy is a coordination exercise across eight repositories.

Architecture that scales well is almost always architecture that started simple and grew in response to measured constraints. The premature abstraction is more dangerous than the premature optimization — and both are more dangerous than the humble monolith that just needs a read replica.

A Framework for Thinking About Systems

Before we get into specifics, I want to lay out a mental model that I come back to on every system I design. It's not original — bits of it are borrowed from years of reading, building, and watching things break — but having it as a framework helps me ask the right questions before I start drawing boxes and arrows.

Every system design decision lives at the intersection of five forces:

Constraints — what the team, timeline, budget, and business reality actually allow. A five-person team with a six-week deadline lives in a different universe than a platform team with a two-year horizon. The constraints aren't limitations to work around. They're the shape of the solution.

Tradeoffs — what you're giving up to get what you need. Every architectural choice is a tradeoff, and the discipline is in making tradeoffs consciously rather than accidentally. Choosing eventual consistency buys you availability and partition tolerance, but the team needs to understand what "eventual" means in their domain. Choosing a monolith buys you simplicity and speed, but you accept that extraction into services later will cost more than starting with them.

Failure modes — how the system breaks when (not if) something goes wrong. The question isn't whether your database will go down. It's what happens to the user experience when it does. Does the system degrade gracefully, showing cached data and queuing writes? Or does it show a blank screen? The failure mode you design for is the failure mode you get. The ones you don't design for are the ones that wake you up at 3 AM.

Evolution — how the system changes over time as requirements shift, traffic grows, and the team learns. The system you build in month one will look different in month eighteen, and the architecture should make that evolution cheap rather than expensive. This is why reversibility matters. One-way doors — decisions that are expensive to undo — deserve deliberation. Two-way doors deserve speed.

Operational cost — what it takes to keep the system running day to day. The architecture that looks elegant on a whiteboard but requires a dedicated engineer to babysit the message broker has a hidden tax that compounds every month. Operational cost includes monitoring, on-call burden, deployment complexity, and the cognitive load of understanding how the pieces fit together.

These five forces pull in different directions, and the art of system design is finding the balance point for your specific context. There's no universal right answer — there's only the right answer for this team, this problem, this moment. What I've found is that teams who name these forces explicitly make better decisions than teams who navigate them by instinct. Instinct works until the system gets complex enough that no one person holds the whole picture in their head.

We'll expand this framework in later parts of the series — applying it to resilience decisions, shipping discipline, and the emerging patterns of AI-native architecture. For now, keep these five forces in mind as we walk through the specifics of scaling and performance. Every decision in the sections that follow is a negotiation between them.

Think Service, Not Server

The single most important architectural principle for systems that need to scale is deceptively simple: don't let your application remember things between requests.

Stateless design means any instance of your application can handle any request. No sticky sessions. No in-memory state that matters. The user's session lives in Redis or a database. The file upload goes to S3, not local disk. The background job state lives in a queue, not in a thread's memory.

This sounds obvious until you watch a team discover, during a 3 AM incident, that their "stateless" application stores temporary calculation results in a class-level dictionary that persists between requests. Or that their WebSocket connections assume the same server will handle all messages in a conversation. Or that their file processing pipeline writes intermediate results to /tmp and assumes the next step will run on the same instance.

When your application is truly stateless, scaling becomes a capacity problem rather than an architecture problem. You add instances. The load balancer distributes. Done.

Here's what a well-designed request lifecycle looks like — each layer does one thing, and no layer assumes it knows which instance handled the previous request:

The CDN handles static assets and cacheable responses. The load balancer routes to any healthy instance. The application processes the request without caring which instance it is. The cache absorbs repeated reads. The database is the source of truth.

Every component in this chain can be replaced, scaled, or failed-over independently because none of them hold state that another component depends on. The CDN can be Cloudflare today and Fastly tomorrow. The load balancer can shift traffic between regions. App instances can be terminated mid-request and the retry goes to another instance without the user noticing.

That independence — each layer doing one thing and holding no assumptions about the others — is what makes the system scalable. The architecture disappears when it works, which is exactly the point.

The Scaling Playbook

Scaling decisions should be responses to measured bottlenecks, not predictions about hypothetical ones. But when a bottleneck arrives, it helps to have a mental model for where to look and what to try.

Most scaling problems fall into a handful of categories, and each category has a relatively predictable set of interventions:

The key insight is that bottlenecks shift. You add a cache layer and the database reads drop by 80 percent — great. Now the writes are the bottleneck because the cache absorbed all the read load that was previously slowing down the write connections too. You add read replicas and discover that the primary is spending most of its time on replication rather than serving writes. You scale the app tier horizontally and find that the connection pool to the database is now the constraint.

This is normal. Scaling is iterative. Every intervention reveals the next bottleneck, and the right response is to measure again, not to pre-emptively solve problems you haven't measured yet.

Horizontal scaling — adding more instances of the same thing — is almost always preferable to vertical scaling — making one instance bigger. Horizontal scales further, fails more gracefully (losing one of twenty instances is a 5 percent capacity reduction; losing your one big instance is a total outage), and aligns with cloud-native pricing models.

The exception is databases. Vertical scaling of a database primary is often the right move because horizontal scaling of writes is fundamentally hard. Sharding introduces routing complexity, cross-shard query limitations, and rebalancing headaches. Vertical scaling the primary buys time without introducing that complexity, and time is what you need to decide whether sharding is actually necessary or whether write optimization — better indexes, batch operations, async writes — can close the gap.

AI-driven capacity planning is becoming genuinely useful here. Tools that analyze historical traffic patterns and correlate them with infrastructure metrics can predict scaling needs hours or days before they become incidents. The value isn't in the prediction itself — any ops team can look at a dashboard and see traffic climbing — but in the correlation of multiple signals that humans tend to miss: memory pressure climbing while CPU stays flat, connection pool utilization creeping up only during specific query patterns, or cache hit rates dropping because a recent deploy changed access patterns.

The fundamentals haven't changed, though. Measure. Identify the bottleneck. Apply the smallest intervention that buys headroom. Measure again.

Data Is the Architecture

If "Systems That Last" argued that your data model is your architecture, this is the operational corollary: your data tier strategy determines your scaling ceiling.

Most systems have a read-to-write ratio somewhere between 10:1 and 100:1. Users browse far more than they create. Dashboards query far more than they update. Search runs far more than indexing. This asymmetry is an architectural gift — it means you can scale reads and writes independently, using different strategies for each.

Read scaling is a well-solved problem. Read replicas, caching layers, CDN edge caching, materialized views — the tools are mature, the patterns are understood, and the failure modes are documented. A primary database with three read replicas behind a connection pooler like PgBouncer can handle dramatic read scaling without application code changes.

Write scaling is harder because writes must be consistent. You can't cache a write. You can't serve a write from a replica. Every write must reach the primary, and the primary's throughput is bounded by disk, network, and transaction isolation requirements. Write optimization — batching, async processing, eventual consistency where the domain allows it — is how most systems extend their write ceiling without sharding.

| Data Tier | Best For | Scaling Model | Typical Latency |

|---|---|---|---|

| Relational DB (Postgres, MySQL) | Structured data, transactions, complex queries | Vertical + read replicas | 1-10ms |

| Cache (Redis, Memcached) | Hot reads, session state, rate limiting | Horizontal (clustering) | < 1ms |

| Object Storage (S3) | Files, media, backups, data lake | Effectively infinite | 50-200ms |

| Search (Elasticsearch, Typesense) | Full-text search, faceted queries, log analytics | Horizontal (sharding) | 5-50ms |

| Vector Store (Pinecone, pgvector) | Embeddings, semantic search, AI retrieval | Horizontal (sharding) | 10-50ms |

| Message Queue (SQS, RabbitMQ) | Async processing, decoupling, job queues | Horizontal (partitioning) | 1-10ms |

The vector store row is new to this table, and that's the point. In 2024, vector stores were a novelty — something you'd use if you were building a chatbot or a recommendation engine. In 2026, they're becoming a standard data tier for any system that interacts with AI models. Retrieval-augmented generation (RAG) pipelines, semantic search, and embedding-based classification all depend on fast similarity search across high-dimensional vectors.

If you're building a system today that will interact with AI in any capacity — and increasingly, that's most systems — a vector store belongs in your data tier strategy from the start. Not because you need it on day one, but because retrofitting vector search into an existing architecture is the same pain as retrofitting full-text search was a decade ago. The teams that planned for it early built it into their data model. The teams that didn't spent six months bolting it on later.

The caching strategy that works at low traffic — cache everything, invalidate on write — usually breaks at high traffic because invalidation storms overwhelm the database. Cache with explicit TTLs and stagger expiration times to avoid thundering herds.

Security Is Not a Layer

I want to address a misconception that persists even among experienced architects: the idea that security is something you add to a system, like a coat of paint.

Security is a property of the architecture itself. It emerges from the decisions you make about trust boundaries, data flow, access patterns, and failure modes. You can't bolt it on any more than you can bolt structural integrity onto a building after the foundation is poured.

Zero-trust architecture makes this explicit. Instead of a hardened perimeter around a soft interior — the castle-and-moat model that dominated enterprise architecture for twenty years — zero trust assumes that every request, from every source, is potentially hostile. Every service authenticates every request. Every data access is authorized against a policy. Every network path is encrypted. The perimeter is everywhere.

This is more work upfront. But it means a compromised service can't pivot freely through the rest of your infrastructure. The blast radius of a breach is contained to what that service's credentials can access, not to everything inside the firewall.

AI-assisted development has become a genuine force multiplier for security — and simultaneously a new category of risk. The same tools that help you build faster can introduce vulnerabilities faster too, and that duality is something most security models haven't caught up with yet.

On the defense side, AI-powered security scanning is maturing rapidly. Tools like Claude Code Security don't just pattern-match against known CVEs — they read and reason about code the way a human security researcher would, tracing how data moves through your application, understanding how components interact, and catching vulnerabilities that static analysis misses. Every finding goes through multi-stage verification where the model re-examines its own results, attempting to disprove its findings and filter out false positives before anything reaches a human analyst. Nothing gets applied without human approval — the AI identifies problems and suggests patches, but developers make the call.

That's the promising side. Here's the uncomfortable one.

AI-generated code introduces a new attack surface that most security models don't account for. Code from AI assistants can include subtle vulnerabilities — insecure defaults, missing input validation, SQL injection through string interpolation — that look correct to a reviewer who trusts the tool. The defense is using AI security scanning to catch what AI code generation might introduce, while treating every AI-generated contribution with the same scrutiny you'd give a pull request from a new hire: verify, don't trust.

The teams getting this right are doing both simultaneously — using AI to write code faster and using AI to audit that code more thoroughly than a human review alone would catch. The tools that generate the vulnerability and the tools that find it are converging, and the architects who treat security as a continuous property of the system rather than a gate at the end of the pipeline are the ones sleeping soundly.

The same principle applies to AI agents and autonomous systems. An AI agent that can read your database, call external APIs, and execute code needs a permission model at least as rigorous as a human user's — arguably more rigorous, because it operates faster and without the judgment that makes a human pause before running DROP TABLE in production.

Principle of least privilege isn't new advice, but it's newly urgent. The systems we're building in 2026 have more autonomous components, more external integrations, and more surface area than anything we built five years ago. Every one of those components is a trust boundary, and every trust boundary is a security decision.

Performance Is a Feature

There's a framing of performance that treats it as a nice-to-have — something you optimize for after the features are built, if there's time left in the sprint. This framing is wrong and it's expensive.

Performance is a feature because users experience latency directly. A dashboard that loads in 200 milliseconds feels instant. The same dashboard at two seconds feels broken. The features are identical. The user experience is not.

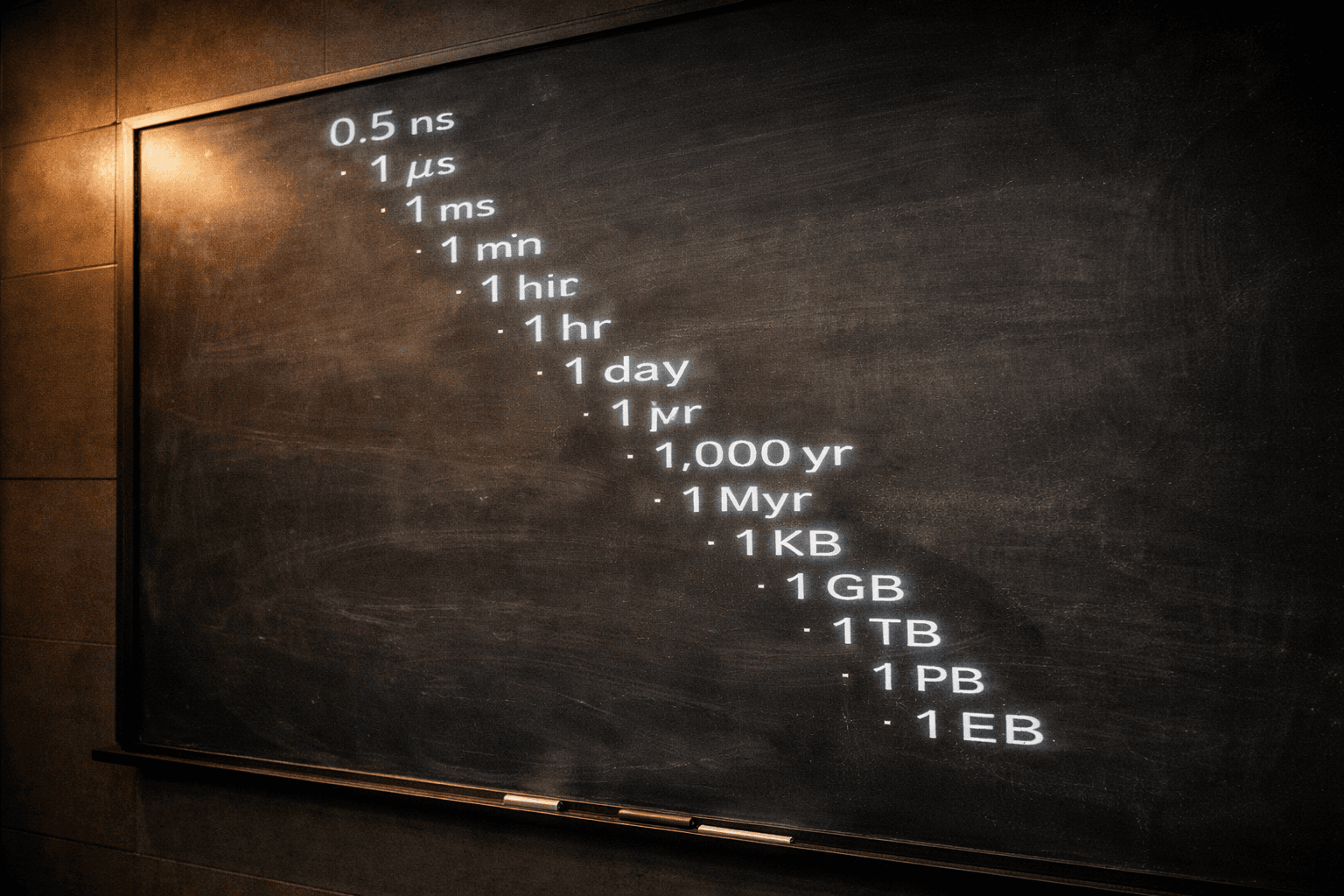

Latency budgets make this concrete. If your user-facing response time target is 200 milliseconds, and a request touches your CDN (5ms), load balancer (1ms), application (50ms), cache (1ms), and database (30ms), you've used about 87 milliseconds. That leaves 113 milliseconds of headroom for growth, new features, and the inevitable third-party API call that someone will add in six months.

Every network hop has a tax. Every new service in the request path adds latency. Every database query that could have been cached but wasn't is milliseconds you don't get back. These costs are individually small and collectively devastating — a system that adds one 10ms dependency per quarter is 40ms slower by year's end, and nobody can point to a single decision that caused it.

The discipline is simple: measure latency at every boundary, set budgets, and treat budget overruns like bugs. A P99 latency regression is a defect, not a performance consideration. If you wouldn't ship a feature with a broken validation rule, don't ship one with a 2x latency increase.

This is where architecture becomes invisible. Good performance decisions — caching strategies, connection pooling, query optimization, CDN configuration — don't announce themselves. Users never thank you for the page that loaded fast. They just use the product without friction, which is what they came to do.

Bad performance decisions announce themselves loudly, usually through support tickets, churn metrics, and the sinking feeling when you open the monitoring dashboard on Monday morning.

The architecture nobody notices is the architecture that works. If users are aware of your infrastructure decisions, something has gone wrong.

The best systems I've worked on shared a common quality: you could describe the entire architecture in five minutes on a whiteboard, and nothing in the description was surprising. Stateless services behind a load balancer. A relational database with read replicas. A cache layer for hot data. A queue for async work. A CDN for static assets.

The decisions were unremarkable. The system was reliable. The team spent their time building features rather than debugging infrastructure. Nobody wrote a blog post about it — until now, I suppose — because there was nothing exciting to write about.

That's the whole point. Architecture that works well is architecture that disappears. The scaling decisions that succeed are the ones nobody notices because the system just handles the load. The performance optimizations that matter are the ones users never think about because the page just loads.

The exciting architecture is the one that fails at 3 AM. The boring architecture is the one that lets you sleep. I know which one I'd rather build.

This is Part 1 of a series on solution architecture. In Part 2, we'll talk about what happens when things go wrong despite your best decisions — because they will — and the architectural discipline that determines whether a failure is a blip or a catastrophe. In later parts, we'll cover shipping discipline, the full scaling journey, the subsystems that hold it together, the numbers every architect should know, and a complete system design that ties it all together. The five forces don't get simpler as systems grow. But naming them helps.