There's a category of code that never reaches a user. No one sees it in a browser. No one interacts with it through an API. It runs in silence, in CI pipelines and local terminals, and its only purpose is to tell you when something broke. If it does its job perfectly, you never think about it. If it doesn't exist, you think about it constantly — usually at 2 AM, usually with a production incident channel filling up beside you.

Tests are the code you don't ship. And they might be the most important code you write.

The Lie We Tell Ourselves

Every developer who skips tests has a reason. The reasons are always rational, always practical, always wrong.

"I'll add tests later." You won't. You know you won't. The feature ships, the next ticket starts, and "later" becomes a permanent state. Six months from now, someone will change that untested function and break something in a way that takes three days to diagnose — because there's no test to tell them they broke it in three seconds.

"This code is simple enough that it doesn't need tests." The Ariane 5 rocket exploded forty seconds after launch because of a data conversion in code that was simple enough that it didn't need tests. A 64-bit floating-point number was converted to a 16-bit signed integer. The value overflowed. The guidance system shut down. The rocket self-destructed. Seven billion dollars, forty seconds, one "simple" conversion.

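A conversion like that is exactly the kind of code that looks too small to test. As a rough sketch only (the function name `to_int16` and the choice to saturate rather than raise are my assumptions for illustration, not how the Ariane flight software worked), a guarded conversion and the boundary tests that pin it down might look like this:

```python
import math

INT16_MIN, INT16_MAX = -2**15, 2**15 - 1


def to_int16(value: float) -> int:
    """Convert a float to a 16-bit signed integer, saturating at the limits.

    Saturation is an assumed policy for this sketch; raising an error
    would be an equally valid choice, as long as it is tested.
    """
    if math.isnan(value):
        raise ValueError("cannot convert NaN to an integer")
    # Clamp first so that infinities and huge values never reach int().
    clamped = max(float(INT16_MIN), min(float(INT16_MAX), value))
    return int(clamped)


def test_in_range_values_convert_unchanged():
    assert to_int16(1234.0) == 1234
    assert to_int16(-1234.9) == -1234  # int() truncates toward zero


def test_out_of_range_values_saturate():
    assert to_int16(40000.0) == INT16_MAX
    assert to_int16(-40000.0) == INT16_MIN
    assert to_int16(float("inf")) == INT16_MAX
```

The interesting assertions are the ones at the edges. The in-range case is the one everybody would have checked by hand anyway.
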
"We're moving too fast for tests." You're moving too fast without tests. Speed without confidence is just velocity in a random direction. You're shipping faster, but you're also debugging faster, rolling back faster, apologizing faster.

The excuse is always time. "We don't have time to write tests." But you always have time to debug production at midnight. You always have time for the three-day investigation into why the billing service is sending duplicate charges. You always have time for the postmortem. The time exists — you're just spending it on the wrong end of the problem.

What Tests Actually Protect

Here's what most people get wrong about testing: they think tests exist to verify that code works. That's true, but it's the least interesting thing tests do.

Tests protect your ability to change things.

A codebase without tests is a codebase that's afraid of itself. Every change is a gamble. Every refactor is a prayer. Engineers move slowly — not because they're cautious, but because they're terrified. They can't tell whether a modification three layers deep will cascade into something catastrophic. So they don't modify. They work around. They add another if-statement instead of restructuring. They copy-paste instead of extracting. The code calcifies. Every layer of avoidance becomes the next engineer's trap.

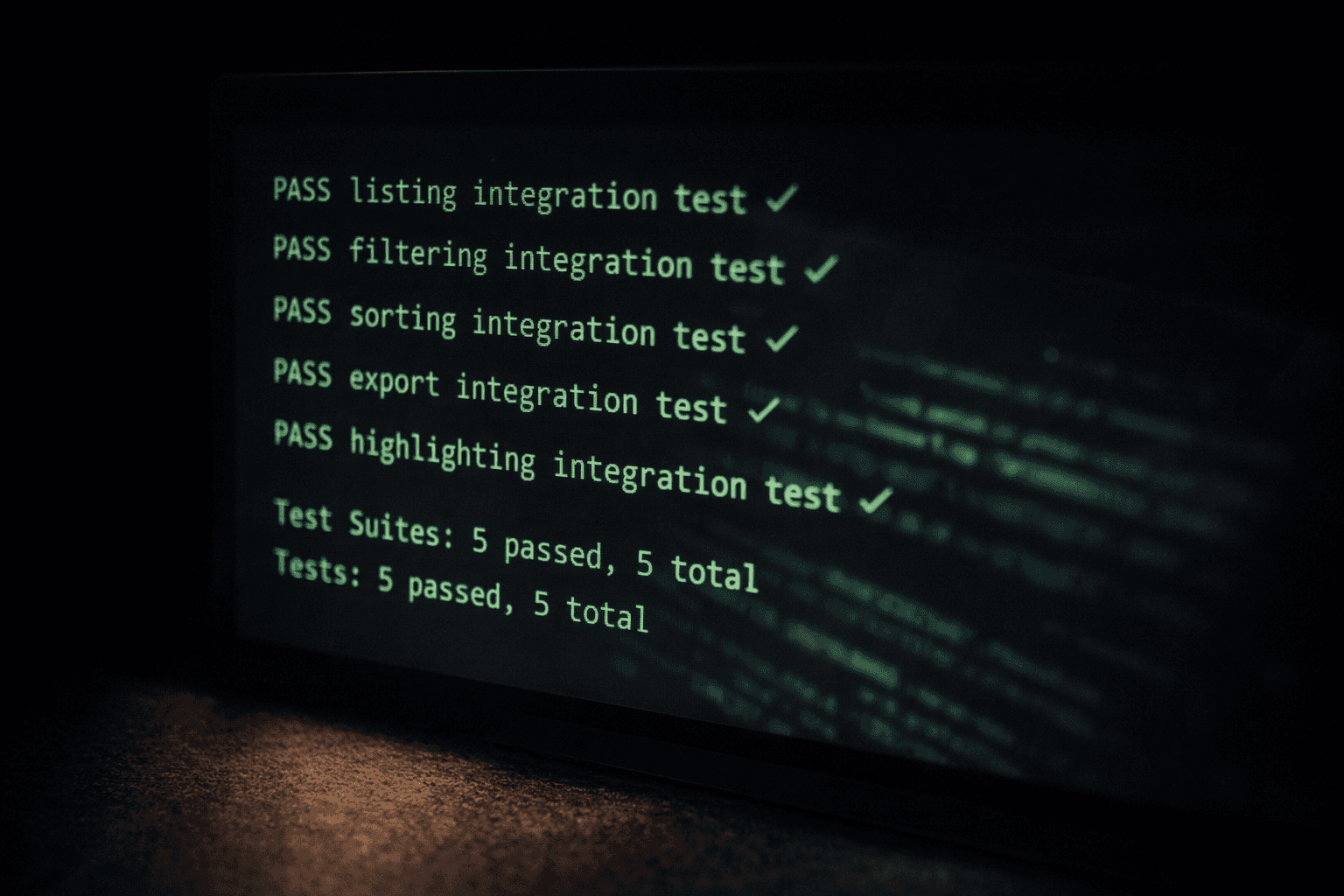

A codebase with good tests is a codebase that invites change. Rename a function, run the suite, see what breaks. Extract a module, run the suite, confirm nothing changed. Upgrade a dependency, run the suite, catch the incompatibility before your users do. The tests give you the one thing that untested code never can: courage.

Tests prove one thing above all: that your code can survive the next person who touches it.

And that next person might be you, six months from now, having forgotten every assumption you made today.

The Three AM Test

I have a mental model I use when deciding what to test. I call it the Three AM Test.

Imagine it's 3 AM. Your phone buzzes. Production is down. You open the incident channel and see the error trace. It points to a function you wrote — or worse, a function someone modified after you wrote it.

Now ask yourself: if this function had a test, would you have caught this before it shipped?

If the answer is yes, write the test. Right now. Before you do anything else.

The Three AM Test skips past coverage percentages and testing pyramids. It goes straight to consequence. What happens when this breaks? If the answer is "a user loses money," "a patient record gets corrupted," "the billing system double-charges ten thousand accounts" — then the absence of a test is just negligence dressed up as pragmatism.

Start with the code that scares you. The payment logic. The permission checks. The data migration. The state machine that handles order transitions. If you're nervous about touching it, that nervousness is your brain telling you a test should exist.

The Shape of Bad Tests

Writing tests is necessary. Writing bad tests is almost worse than writing none, because bad tests create a false sense of security and slow everything down without catching anything real.

I've seen enough bad test suites to recognize the patterns.

Tests that test the implementation, not the behavior. You refactor a function — same input, same output, different internal approach — and forty tests break. None of them caught an actual bug. They just asserted that the code was written a specific way. Now every refactor requires rewriting the test suite, and engineers stop refactoring.
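
A small sketch of the difference, using a hypothetical `Cart` class invented for this example. The first test reaches into a private attribute and breaks the moment `_lines` becomes a dict or a dataclass. The second only cares about what the cart reports:

```python
class Cart:
    """Hypothetical shopping cart, used only to illustrate the two test styles."""

    def __init__(self):
        # Internal detail: a list of (price_cents, quantity) tuples.
        self._lines = []

    def add(self, price_cents, quantity=1):
        self._lines.append((price_cents, quantity))

    def total_cents(self):
        return sum(price * quantity for price, quantity in self._lines)


def test_add_appends_tuple_to_internal_list():
    # Brittle: asserts HOW the cart stores its data.
    cart = Cart()
    cart.add(250, quantity=2)
    assert cart._lines == [(250, 2)]


def test_total_reflects_price_times_quantity():
    # Robust: asserts WHAT the cart computes, through its public interface.
    cart = Cart()
    cart.add(250, quantity=2)
    cart.add(100)
    assert cart.total_cents() == 600
```

Both tests pass today. Only the second one will still pass after a refactor that keeps the behavior and changes the storage.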

Tests that mock everything. Your test creates a fake database, a fake HTTP client, a fake cache, and a fake queue, then asserts that the code calls them in the right order. Congratulations — you've tested that your mocks work. The real database has a different collation. The real HTTP client has a timeout you didn't simulate. The real cache evicts entries your mock doesn't. Everything passes in CI. Everything breaks in production.
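
As a sketch of the alternative, here is a test that runs against a real SQLite database in memory instead of a mocked connection. The `users` table and the `register` helper are hypothetical, and SQLite is only standing in for whatever database you actually run, which may well behave differently. The point is that a real engine, not a mock, is what enforces the constraint:

```python
import sqlite3


def create_schema(conn):
    # NOCASE makes the primary key (and so uniqueness) case-insensitive.
    conn.execute("CREATE TABLE users (email TEXT COLLATE NOCASE PRIMARY KEY)")


def register(conn, email):
    """Insert a user; return False if the email is already taken."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute("INSERT INTO users (email) VALUES (?)", (email,))
    except sqlite3.IntegrityError:
        return False
    return True


def test_duplicate_email_is_rejected_case_insensitively():
    conn = sqlite3.connect(":memory:")
    create_schema(conn)

    assert register(conn, "ada@example.com") is True
    assert register(conn, "ADA@example.com") is False

    count = conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
    assert count == 1
```

A mocked connection would have happily accepted both inserts.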

Tests that are so slow nobody runs them. A test suite that takes twenty minutes is a test suite that runs in CI and nowhere else. Developers push code and context-switch while waiting. By the time the failure notification arrives, they've moved on to something else. Fast feedback loops are the entire point of testing. Kill the speed, kill the habit.

Tests with no assertions. I wish I were joking. I've reviewed test files where a function is called and the test passes because nothing threw an exception. The code could return absolute garbage and the test would be green. These are comfort blankets, not tests.
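
Here is roughly what that looks like, next to a version that would actually fail if the behavior changed. The `apply_discount` function is a made-up example:

```python
def apply_discount(price_cents, percent):
    """Return the price after taking a whole-number percentage off."""
    return price_cents - price_cents * percent // 100


def test_apply_discount_runs():
    # Comfort blanket: passes as long as nothing raises,
    # even if the function returns nonsense.
    apply_discount(1000, 15)


def test_apply_discount_takes_percentage_off():
    # Real test: fails if the arithmetic changes.
    assert apply_discount(1000, 15) == 850
    assert apply_discount(1000, 0) == 1000
    assert apply_discount(1000, 100) == 0
```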

| Anti-Pattern | What Goes Wrong | The Fix |
|---|---|---|
| Testing implementation | Every refactor breaks tests | Assert on outputs and behavior, not internals |
| Mocking everything | Tests pass, production breaks | Use real dependencies where practical; mock only at system boundaries |
| Slow test suites | Developers stop running tests | Parallelize, use in-memory databases, split fast and slow suites |
| No meaningful assertions | False confidence | Every test should fail if the behavior it protects changes |

A green test suite that doesn't catch real bugs is more dangerous than no test suite at all. At least with no tests, you know you're flying blind. With bad tests, you think you can see — and that confidence leads you straight into the mountain.

The Culture Problem

Here's the uncomfortable part. Testing is, at its core, a culture problem — and no amount of tooling fixes a team that doesn't care.

In teams where tests are valued — where the senior engineers write tests, where code reviews flag missing tests, where the test suite is maintained like production code — everyone writes tests. Naturally. Without friction.

In teams where tests are an afterthought — where "we'll add tests later" is accepted, where test failures are ignored because "that test is flaky," where the test suite is a graveyard of skipped and broken assertions — nobody writes tests. Not because they can't. Because the environment has taught them it doesn't matter.

I once joined a team that had a test suite with 60% coverage. Sounds reasonable. Then I looked closer. Half the tests were disabled with skip annotations. A quarter of the remaining tests had no assertions — they just ran the code and checked it didn't throw. The actual meaningful test coverage was closer to 12%.

But the dashboard said 60%, so leadership believed the codebase was well-tested. Engineers knew the truth but had stopped pushing back. The gap between what the metrics said and what the code actually verified was a chasm — and every production bug fell into it.

The fix had nothing to do with tooling — it started with honesty. We deleted every test that didn't assert something meaningful. Coverage dropped to 15% overnight. Leadership panicked. But for the first time, the number was honest. And from that honest baseline, the team built a suite they actually trusted.

The cultural signals that shape testing habits are subtle but powerful:

- What gets celebrated? If shipping fast gets praised and writing tests gets silence, you've told your team what you value.

- What gets reviewed? If code reviews wave through changes without tests, you've set the standard.

- What gets fixed? If flaky tests stay flaky for weeks, you've told everyone the suite doesn't matter.

- Who writes tests? If only junior engineers write tests and senior engineers "move too fast," the hierarchy has spoken.

Tests as Documentation

There's a side benefit to good tests that doesn't get enough attention: they are the best documentation your codebase has.

Comments lie. They were true when written and never updated. READMEs drift. Wikis decay. Confluence pages from 2019 describe a system that no longer exists.

Tests are different. Tests run. If they don't match reality, they fail. A well-written test tells you exactly what a function is supposed to do, what inputs it expects, what outputs it produces, and what edge cases it handles. And unlike documentation, a test that runs on every change can't become silently outdated: the CI pipeline fails the moment it stops matching the code.

```python
import pytest

# Account and InsufficientFundsError come from the code under test.


def test_transfer_fails_when_insufficient_balance():
    account = Account(balance=100)
    other_account = Account(balance=0)

    # The transfer must be rejected outright...
    with pytest.raises(InsufficientFundsError):
        account.transfer(amount=150, to=other_account)

    # ...and must leave both accounts untouched.
    assert account.balance == 100  # balance unchanged
    assert other_account.balance == 0  # no partial transfer
```

That test tells you more than any comment could. Transfers with insufficient balance raise a specific error. The source balance doesn't change. The destination doesn't receive a partial amount. And if any of that behavior changes, the test will scream.
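
To make that concrete, here is a minimal sketch of an `Account` that would satisfy the test. The class body is my assumption, reconstructed only from what the test demands. A real implementation would also have to think about negative amounts, currencies, and concurrency:

```python
class InsufficientFundsError(Exception):
    """Raised when a transfer exceeds the source account's balance."""


class Account:
    def __init__(self, balance=0):
        self.balance = balance

    def transfer(self, amount, to):
        # Validate before mutating either account, so a rejected transfer
        # leaves both balances exactly as they were.
        if amount > self.balance:
            raise InsufficientFundsError(
                f"balance {self.balance} is less than amount {amount}"
            )
        self.balance -= amount
        to.balance += amount
```

Notice how little of this you needed to read. The test already told you the contract.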

When joining a new codebase, read the tests before reading the implementation. Tests reveal intent — what the code is supposed to do, what edge cases matter, what behavior someone thought was worth protecting. The "what" is almost always more valuable than the "how" when you're getting oriented.

The Right Amount

I don't believe in 100% code coverage. I've watched teams chase that number and end up testing getters, setters, and logging statements — generating noise that buries the signal.

I also don't believe in some magic coverage number that determines whether a codebase is "well-tested." Coverage is a trailing indicator. It tells you where tests exist, not whether they're good.

What I believe in is this: every behavior that matters should have a test that proves it works and will catch when it breaks.

That's it. No percentage. No metric. Just the question: if this breaks, will I know?

The payment flow should be tested obsessively. The admin color scheme can probably survive without a snapshot test. The algorithm that calculates drug interactions in a healthcare system — tested from every angle, with every edge case, reviewed by domain experts. The utility function that formats a phone number for display — a couple of examples and move on.

Test with intensity proportional to consequence. The code that handles money, health, access, and safety deserves more scrutiny than the code that formats a tooltip.
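
A sketch of what that proportionality can look like in practice. Both functions are hypothetical examples: the display formatter gets one example and we move on, while the function that splits money gets checked across a whole range of inputs for the properties that must never break:

```python
def format_phone(digits: str) -> str:
    """Format ten digits for display. Low stakes."""
    return f"({digits[:3]}) {digits[3:6]}-{digits[6:]}"


def split_evenly(total_cents: int, ways: int) -> list[int]:
    """Split an amount into shares that differ by at most one cent. High stakes."""
    base, remainder = divmod(total_cents, ways)
    return [base + 1 if i < remainder else base for i in range(ways)]


def test_format_phone():
    assert format_phone("5551234567") == "(555) 123-4567"


def test_split_never_loses_or_invents_a_cent():
    for total in range(0, 500):
        for ways in range(1, 8):
            shares = split_evenly(total, ways)
            assert len(shares) == ways
            assert sum(shares) == total  # no money created or destroyed
            assert max(shares) - min(shares) <= 1  # as even as possible
```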

The Compound Effect

Here's the thing nobody tells you about test suites: they compound.

The first test you write feels pointless. One assertion against one function. It runs in milliseconds and catches nothing, because you just wrote the function and you know it works.

The fiftieth test catches a regression you introduced while refactoring something unrelated. You didn't know that function depended on a side effect in the module you cleaned up. The test knew.

The five hundredth test gives you the confidence to do a major version upgrade of your ORM, because you can run the suite and see exactly what behavior changed. Without those five hundred tests, that upgrade is a six-week project of manual verification. With them, it's an afternoon.

The ten thousandth test means a new engineer on the team can make a meaningful contribution in their first week, because the test suite catches their mistakes before anyone else has to. The onboarding cost drops. The review burden drops. The team velocity increases — genuinely increases, not the fake increase that comes from skipping quality.

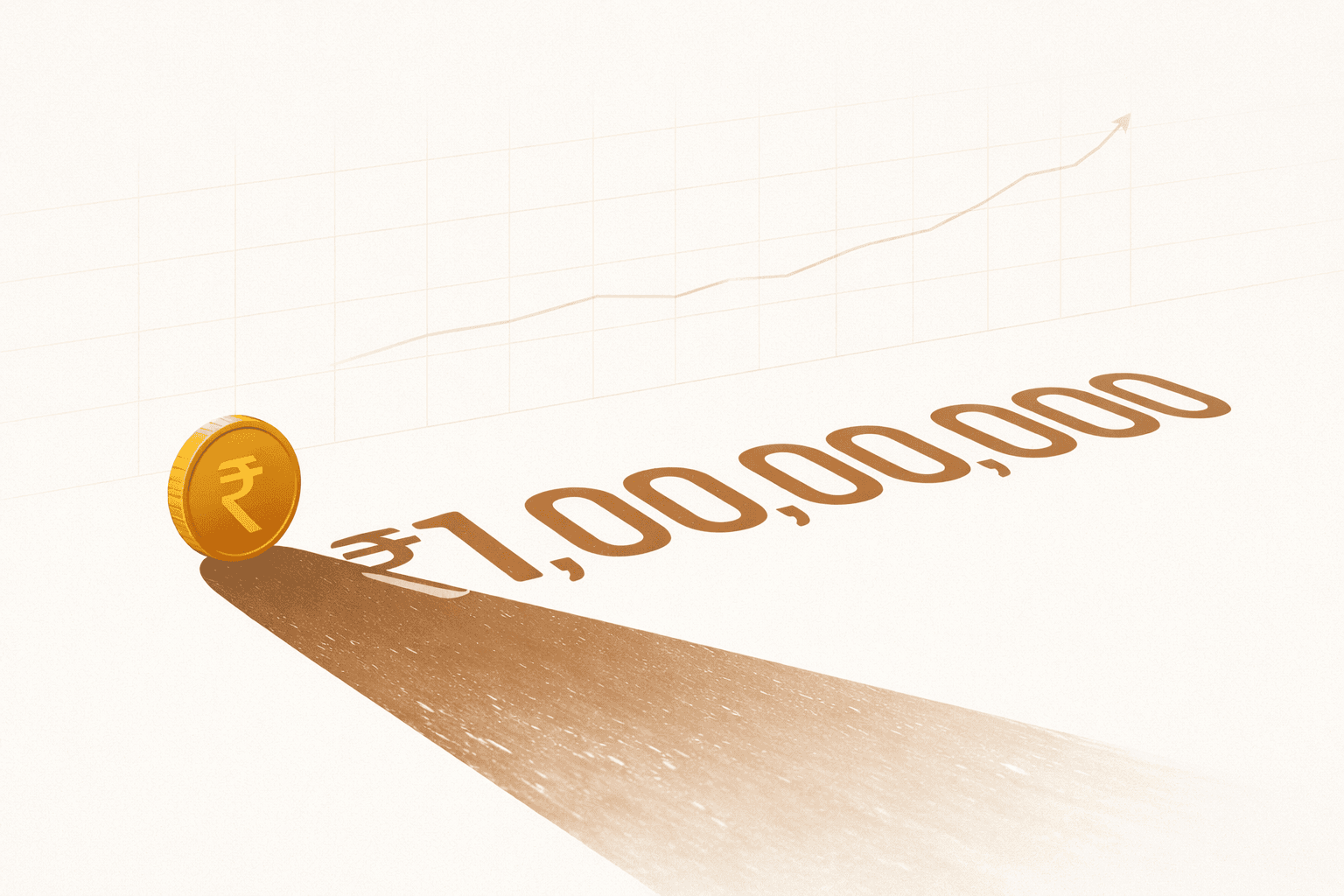

Tests are an investment with compounding returns. The ROI is invisible on day one and undeniable on day three hundred.

Write the Test

The best time to write a test was when you wrote the code. The second best time is right now.

Open your editor. Find the function that makes you nervous — the one you hope nobody touches, the one with the comment that says "HERE BE DRAGONS" or the one with no comments at all because whoever wrote it has already left the company.

Write one test. Just one. Assert that the function does what you think it does.

Then write another.

You're building something that future-you will be grateful for. Something that the engineer who inherits your code will silently thank you for at 3 AM when the pager goes off and the test suite narrows the problem to three lines in fifteen seconds.

That's the code you don't ship. And it might be the most generous thing you ever write.